#082: Superweek 2018 - GDPR, Machine Learning, and Tim's Hair

For the second year in a row for the podcast (but the first appearance since Moe joined the crew), we headed to the Hunguest Grandhotel Galya outside Budapest for Superweek, one of the most distinctive conference experiences in the digital analytics industry. Comfortably isolated over an hour outside of Budapest in a beautiful setting, it’s a temporary community of, for, and by the analyst. With sessions ranging from GDPR to machine learning to attribution to media analytics, the spaces before, between, and after the presentations were filled with extended discussions with great people on a wide range of topics. The “fireside chat” on Wednesday evening was a recording of the podcast with a live audience, where we had attendees share tips and ideas that we found particularly intriguing. And we had quite a bit of fun along the way.

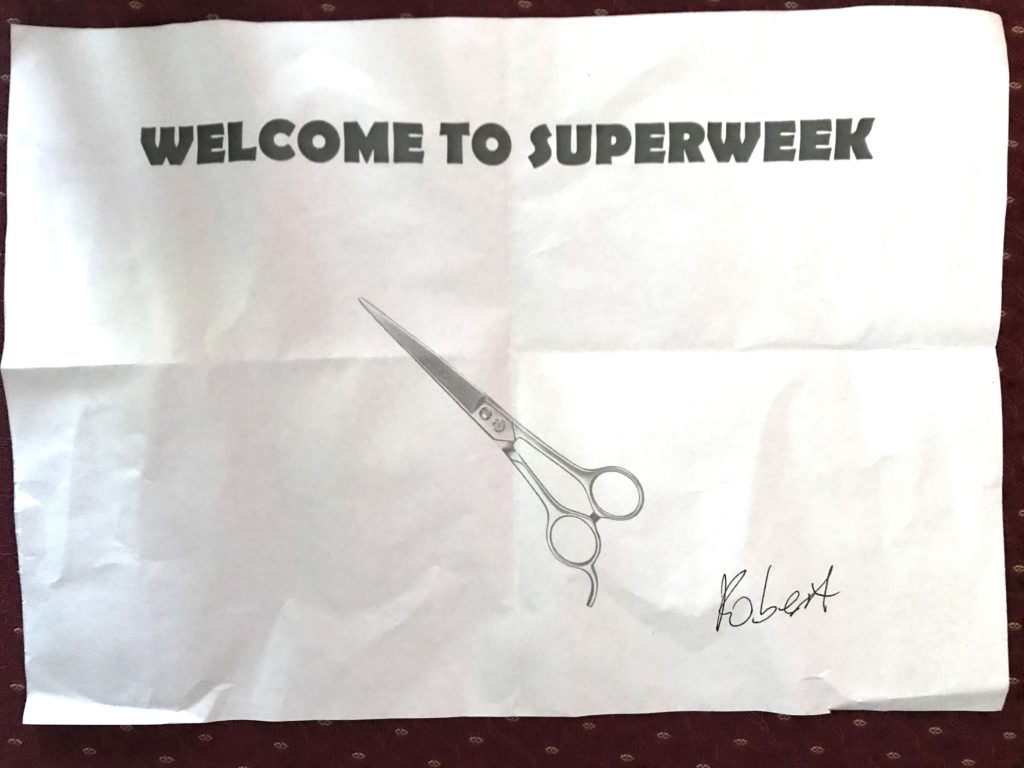

And, somehow, the length of Tim’s hair became a meme. This picture is referenced in the show:

Attendees Participating

In order of appearance, the participants in the show were:

- Prolet Miteva, Autodesk

- Jim Sterne, Rising Media (and more!)

- Hussain Mehmood, Marketlytics

- Matt Gershoff, Conductrics

- Martijn Scheijbeler, Postmates

- Peter Meyer, Nordea

- Robert Petković, Bruketa&Žinić&Grey

- Raphael Calgaro, Ig2

- Mariia Bocheva, OWOX BI

- Charles Farina, Analytics Pros

- Yehoshua Coren, Analytics Ninja LLC

- Krista Seiden, Google

- Erik Driessen, Greenhouse Group BV

- Pawel Kapuscinski, Zalando SE

References Made in the Show

- Zoltán (Zoli) Bánóczy

- GDPR

- Artificial Intelligence for Marketing: Practical Applications (book by Jim Sterne)

- Kayleigh Rogers

- Thong — the definition Moe DIDN’T mean

- Thong — the definition Moe DID mean

- Conductrics

- #mattgershoffed

- All models are wrong, but some models are useful.

- Science and Statistics (George Box paper in the Journal of the American Statistical Association)

- Francis Bacon

- David Hume

- Simo Ahava

- Tealium

- Lee Isensee

- The Measure Slack Team (join link)

- MongoDB

- Mixpanel

- Stéphane Hamel

- Steen Rasmussen

- OWOX BI Pipeline’s BigQuery Connector

- Analytics Pros

- Krista Seiden

- @kegalytics

- Pintro Tunes – Ruben Mak and Erik Driessen’s people tracking solution

- DAPH transcript mining using R

Video of the Event

If you like to watch your podcasts, the Superweek team made that happen!

Some Pictures from the Recording

Courtesy of the Superweek A/V team:

Episode Transcript

[music]

00:04 Speaker 1: Welcome to the Digital Analytics Power Hour. Tim, Michael, Moe, and the occasional guest, discussing digital analytics issues of the day. Find them on Facebook at Facebook.com/AnalyticsHour and their website AnalyticsHour.io. And now, the Digital Analytics Power Hour.

[music]

00:27 Michael Helbling: Hi, everyone. Welcome to the Digital Analytics Power Hour.

[applause]

00:37 MH: This is episode 82. On the advice of our legal team, in accordance with Section 23 of the GDPR, by remaining in the vicinity, you agree to let some of your personal data be possibly recorded by the Digital Analytics Power Hour, GMBH LLC Inc. Please clap to signal your consent. And welcome to our show with a live audience at Superweek!

[applause]

01:02 MH: Now, with the legalese out of the way, for the second year in a row, we are so honored and pleased to join an amazing group of people, high in the Hungarian mountains. And as always, I’m joined by my two co-hosts. Moe Kiss, hailing from The Iconic, from Sydney, Australia. Welcome to the show.

[applause]

01:28 Moe Kiss: Thank you.

[applause]

01:33 MH: And Tim Wilson, my long time partner in crime.

[applause]

01:38 MH: Welcome to the show. And as always, I am Michael Helbling, from Atlanta, Georgia, at Search Discovery. Alright. [chuckle] We didn’t get a chance to compete in the Golden Punchcard, but we believe we’ve come up with an exciting new measurement capability. We’re calling it last clap attribution.

[laughter]

02:02 MH: Okay. By clapping…

[applause]

02:06 MH: Okay, hold on. Please. Data quality’s important to us.

[laughter]

02:10 MH: By clapping, how many are here at Superweek for the very first time?

[applause]

02:17 MH: Yes! Welcome. By clapping, how many here are sober right now?

[laughter]

02:31 MH: Alright. By clapping, how many have come to Superweek because they heard about it on the Digital Analytics Power Hour?

[applause]

02:40 MH: Okay. Thank you. We are so glad that you’re here to be part of what we experience and are so excited about. So you’re all welcome. Thank you for having us. Let’s get this great show started. Now, we’ve been listening to the talks. We’ve been hanging out with everyone. We’ve got lots of questions. We’ve got a great guest tonight. It’s all of you. Welcome to the show! Let’s get things started.

[applause]

03:13 MH: Alright. Who’s got our first… Oh, I’ve got our first question. So normally our first question of the night would go to the person who conceived of this idea in the first place, none other than Zoli. However, he doesn’t like to be in the spotlight. He likes to stay in the background.

[laughter]

03:34 MH: Oh. [chuckle] He told us he doesn’t like to be in the spotlight, so we said, “Well, we can answer this question for you, Zoli, on your behalf.” I wanna ask all of you, how much do we love Zoli?

[applause]

03:52 S?: Zoli! Zoli! Zoli! Zoli!

03:57 MH: Alright, let’s get it going. Who’s got a question? Moe, you’ve got a question for somebody.

04:02 MK: I do have a question. So the question that I actually wanna start with is, there’s been a few hot topics here at Superweek, and none other than GDPR. So the first question is actually for Prolet. She’s here from San Francisco and gave a really great talk on GDPR. And there was one particular point that I wanted to follow up on. In her slide, she talked about the fact that it might be worth… Like one of the key things that you should take away, is separating your operational data from your analytics data. So over to you Prolet.

04:36 Prolet Miteva: Thank you. So first…

[applause]

04:41 PM: I’m not sure what you guys think I’ll say, but I’m not a lawyer.

[laughter]

04:47 PM: With that out of the way… One thing that is important with GDPR is that you not only have to think about how are you collecting your data, but how and for what purposes you’re gonna use it. So just because you collected it, it doesn’t mean you have the right to use it for all purposes. So if you need the data in order to make your site function, it doesn’t mean that you can actually use it in your marketing materials. So if you separate how you collect your data for operational purposes, and how you’re collecting it for analytical purposes, or any other maybe models that you’re creating, you are much more likely to be GDPR compliant and not get in trouble. Because you have to remember that not all of your developers and not everyone that uses your data, would completely understand how GDPR and permissions work. So the more you separate that and the more you have control over permissions, you’ll be in a better place.

05:48 MK: So just to follow up, have I got it correct… So operational data would kind of sit in a different sphere and therefore be subject to slightly different rules? Is that the key benefit?

06:02 PM: That and also how you save the permissions. So if you save the permissions in your analytical data separately, you would know what exactly you have the right to use the data for, versus when you’re just using your operational data to display to your user. For example, how many videos they played on your platform. That will be now completely separated, and you would know that you don’t have the right to access the data. Because the other part is, depending how you go into a consent and depending on your company’s risk taking, you might have to ask for permission for each one of the uses. And putting that in your operational data can get really messy. So it might result in you duplicating data across in multiple platforms, but you might be safer at the end.

07:00 MK: Okay, thanks for that.

07:00 Tim Wilson: Awesome! So GDPR, that’s the last we’re gonna talk about GDPR.

07:05 S?: Yay!

[applause]

07:06 TW: No. It’ll be back. But for especially the first couple of days, the two big topics were GDPR. And what was the other topic? Everyone together.

07:16 S?: Machine learning.

07:17 TW: Machine learning.

07:18 S?: Artificial intelligence.

07:19 TW: Machine learning and AI. So it was kind of a which one’s which. So we’re gonna hit on that other big question, and we’re gonna go to Mr. Jim Sterne. And so…

[applause]

07:31 S?: Yay, Jim!

[applause]

07:35 TW: And Jim Sterne, for anyone who doesn’t know, recently published his latest book of, I think it was like his 273rd book. I don’t know that my data integrity is quite right. It’s close to that. [chuckle] But that was Artificial Intelligence for Marketing: Practical Applications, which I actually can recommend ’cause I have read the whole thing.

07:53 Jim Sterne: You’re the one.

07:54 S?: Whoo!

07:54 TW: I’m the one. [chuckle]

[applause]

07:55 TW: Somebody had to. So the question is, what is an application of AI that you find yourself relaying pretty often? And when you’re talking to a marketer who’s like, “I hear about it, but is that something I need to be thinking or considering or worrying about? Or is that something way, way off in the future that I can kind of ignore for now?”

08:18 JS: Yeah, forget about it.

08:19 TW: Okay, thanks.

08:20 JS: Yeah. Next question.

[laughter]

08:21 JS: Next.

[chuckle]

08:22 JS: So I think the thing that I always reach for is email marketing, because it has enough data and enough repetition and a fast enough speed that people can apply how the machine thinks about it. If I send out an email blast and look at the results, and I think about how I could make improvements. And it didn’t really get a great open rate, or it did open but nobody clicked through, or clicked through but it didn’t convert, whatever it is. And I tweak it a little bit and send it out again and measure the results. Now, that’s like a two or three-week long process, because I’ve gotta send it through committee and send it to the agency, and get the technical people to tag it again and get it out there. But the machine can send out the email and look at the results as they come in and update its mathematical model of what’s important. It will look to see, is it the headline that’s the most important thing, or should I change the color of the button, or should I change the landing page. And in fact, it can test all that stuff, and it’s just trial and error. And it learns over time. So while it will take me several weeks to go through an iteration, it can be constantly mailing and updating and improving and learning.
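The trial-and-error loop Jim describes (send, look at the results, update the model, send again) can be illustrated with a small simulation. This is only a sketch, assuming an epsilon-greedy strategy, which is one of several ways such a system could learn; the variant names and click rates are hypothetical and do not come from the show.

```python
import random


def pick_variant(stats, epsilon, rng):
    """Epsilon-greedy choice: usually send the best variant so far, sometimes explore."""
    if rng.random() < epsilon:
        return rng.choice(list(stats))
    # Observed click rate; max(..., 1) avoids dividing by zero before any sends.
    return max(stats, key=lambda v: stats[v]["clicks"] / max(stats[v]["sends"], 1))


def simulate(true_rates, n_sends=5000, epsilon=0.1, seed=0):
    """Send emails one at a time, updating per-variant stats after each result."""
    rng = random.Random(seed)
    stats = {v: {"sends": 0, "clicks": 0} for v in true_rates}
    for _ in range(n_sends):
        variant = pick_variant(stats, epsilon, rng)
        stats[variant]["sends"] += 1
        # Simulated recipient: clicks with the variant's (hypothetical) true rate.
        if rng.random() < true_rates[variant]:
            stats[variant]["clicks"] += 1
    return stats


if __name__ == "__main__":
    # Hypothetical subject-line variants and click rates, for illustration only.
    results = simulate({"headline_a": 0.02, "headline_b": 0.05})
    for name, s in results.items():
        print(name, s)
```

Each send updates the running statistics immediately, which is the contrast Jim draws with a human iteration that takes weeks.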

09:38 TW: Awesome! So, Michael, Moe, once we get around to getting an email subscription list for the Digital Analytics Power Hour, we’ll be diving right into that.

09:47 MK: That’s one of my KPIs [09:48] ____.

09:48 TW: Although we do need some volume of data, I guess, before you can actually really effectively do that.

09:51 MH: Yeah. Big data.

09:53 JS: That would be absolutely necessary.

09:54 TW: Alright, just having our parents subscribe, that’s probably not enough. That’s not good data. Awesome. Thanks, Jim.

10:00 MH: Alright. Okay.

[applause]

10:04 MH: Now…

10:05 MK: So for our next question, we have something quite important.

10:08 MH: Yeah. We’re gonna… We do?

10:11 MK: Yes.

10:11 MH: Oh, yes. So before Moe asks the next question, we go out to all of you. But there’s someone who has set the standard for what it means to answer a question on this kind of podcast in an exceptional way. If some of you who were here last year remember Kayleigh Rogers from the UK, did such an amazing job answering a question. And so we have for “The Best Answer to a Question,” open to the audience, the Kayleigh Rogers Commemorative Prize, that Moe brought all the way from Australia. Oh, yeah.

[laughter]

10:51 MH: So I know Kayleigh’s not here to do a repeat win, but maybe she’ll hear it from some of her friends or if she listens to the show. But Moe’s got a question. We want an answer from somebody out there, it could be anyone. But be aware, the stakes are high.

[laughter]

11:09 S?: 42.

11:10 MH: Yeah. 42. Yeah. But what’s the question? [chuckle]

11:13 MK: So for those of you who are listening and obviously can’t see me running around the room with a… I’m gonna say the word “thong,” ’cause all the Americans are gonna freak out, which means a flip-flop. It is a very handy Australian bar magnet. So for whoever’s got the best comment, question, etcetera, throughout the show, this super expensive… I promise it wasn’t made in China, bottle opener.

11:42 TW: I feel like we should have a contest for people who’ve just heard that description who aren’t here, actually tweet what they think she was just describing. And at some point, we’ll put a picture of it.

[laughter]

11:54 TW: Okay. So who’s ready to answer a question? Who’s got what it takes? We start easy and then we escalate, rapidly.

[applause]

12:03 TW: Hussain! Whoo! Alright. Moe, you’ve got the question.

12:10 MK: Oh, I do have the…

12:11 TW: Yeah.

12:12 MK: Okay.

12:13 TW: Yeah, I’ll get you the microphone.

[chuckle]

12:16 MK: So the question that I have is, from the week so far, I’ve actually been asking this question to people throughout the week over meal times, ’cause it’s my favorite thing to ask. What has been your favorite highlight moment, something that you just think, “Yeah, that was unreal”?

12:33 Hussain Mehmood: So I think my favorite has been just something that Tim did in his presentation. So he…

[laughter]

12:42 HM: Yeah, yeah. This was it, this was it, yeah. So this was just flipping the chart, and kind of doing it horizontally, and then having so much space for comparison. That was very interesting because I had been thinking of it like, where would I place all this and how would I compare it? And just having that light bulb was quite amazing, to be able to do that.

13:05 S?: Distribution!

13:06 TW: We have a winner! Oh wait. No, we’re supposed to wait.

[overlapping conversation]

[laughter]

13:09 MH: Oh. Yeah, wait.

[laughter]

[background conversation]

13:14 TW: Awesome. Thank you.

13:14 MK: Thank you [13:15] ____.

13:15 MH: Very nice. Thank you.

13:16 TW: I’m done. We’ve dropped the… So I’ve been standing here, I saw him earlier. Where is the man who has had a hashtag created for him? Oh, there he… Here he comes.

13:27 MH: There he is!

13:28 S?: [13:28] ____ in the corner.

13:29 TW: Okay. We have Matt Gershoff.

13:31 Matt Gershoff: Hello.

13:32 TW: And from Conductrics, all the way from New York City.

[applause]

13:37 MH: New York City.

13:38 MG: Thanks for having me.

13:39 TW: And I do recommend anyone who wants to, to go and do a Twitter search for hashtag #MattGershoffed.

13:46 MG: Please don’t.

[laughter]

13:46 TW: ‘Cause I think everyone who’s here has experienced it. And…

13:51 MG: Really, don’t do that.

13:51 TW: It’s a wonderful experience.

13:54 S?: Let’s make it trend.

13:55 MG: No one needs to do that.

[laughter]

13:58 TW: So one of the things Matt had in his session, and you and I, Matt, we were talking about it afterwards, was a somewhat famous George Box quote, which was, “All models are wrong, but some are useful.” And I immediately saw it and thought, oh, that’s this kind of throwaway thing, that yes, the data’s not perfect. And Matt and I were talking later, and I said, “Yeah, but I’ve started to realize there’s a more kind of profound… That really is a good point.” And Matt was like, “Yes! Exactly. I’ve realized that there really is a meaningful point in that quote ‘All models are wrong, but some are useful.’” So, Matt, can you explain the profundity in that quote?

14:37 MG: Sure. So actually it’s not, it’s actually technically… I don’t believe it’s the quote that he used. Actually he wrote a paper in 1976 that I read… Actually it’s a really good paper about statistics and science in general.

14:51 MH: Nice try, [14:51] ____.

14:52 TW: Why don’t you just recap…

[overlapping conversation]

14:54 TW: Why don’t you kinda do a rundown of that paper instead, for everyone?

[laughter]

14:56 MG: Actually, you know what? Well, as a matter of fact, Tim, I’m glad you asked me to do that because I have the paper right here.

[laughter]

15:01 MG: And I’m gonna read from it, because I think this actually gets to the main point of it. And there’s a section called “Worrying Selectively.” So to worry selectively. And it says, “Since all models are wrong, the scientist must be alert to what is importantly wrong. It is inappropriate to be concerned about mice, when there are tigers abroad.”

[laughter]

15:22 TW: Ooh.

15:23 MG: And if that doesn’t explain that… And I think that… We can all pause. That’s actually the quote that… From George Box. And I think the point is, is that… And actually this is a nod to Bacon and to Hume.

15:38 TW: Of course it’s to Hume.

15:39 MH: Oh, yeah.

[overlapping conversation]

15:40 MG: The idea is that truth is the wrong metric. And so I brought it up mostly not as a throw away, but just to liberate us. Because sometimes we get concerned about getting it right or wrong, and really, right or wrong is really the wrong metrics. Because it’s always gonna be wrong. And it’s really about utility. And so it’s really up for us, the designer, as the scientist or the analyst, we’re the designer of the analysis, and really what we’re trying to do is to try to… It’s really about utility. So how useful is this model? And that’s really for us to decide. So it’s not to let someone say, “You’re doing it wrong,” it’s, “As long as this is useful, you’re good.”

16:18 TW: And useful being, if it’s better than a coin flip and cost effective? So there was another point that you made around the usefulness of, do you always want the best model, or are there trade-offs we should be thinking about?

16:34 MG: Yes, you always want the best model, but the point is, is the best is gonna be based upon all these factors…

16:39 TW: The most predictive, most accurate model?

16:41 MG: Most accurate, yeah.

16:42 TW: No, absolutely not, right?

16:42 MG: No, accuracy… Yeah, there’s this trade-off between accuracy… And in this case, I’m gonna bring up GDPR, even though we didn’t want to. But with interpretability. And so there is some utility sometimes to being able to interpret a model, one, to be able to debug it. And so it’s important to have this trade-off. You don’t wanna be obsessed with accuracy, when you might have some other objectives, in which something less complex will have greater use for your use case.

17:08 TW: So if you’ve got deep learning that gives you a 90% perfect answer, but it’s a total black box, or you have a simple regression that gives you 85%, you may say, “Hey, I can understand the regression.” The “best model” might be the one that is…

17:24 MG: Yeah, and you can explain it to the client or to somebody else. So that’s why it’s really about [17:28] ____ utility. So you’re gonna factor in all the aspects of the result, and then it’ll be up to you to decide what is optimal, with respect to your objectives.

17:38 TW: Alright. So all of our listeners have now officially been, let’s all say it together.

17:42 S?: #MattGershoffed.

17:45 MH: Thank you, Matt.

17:46 MG: Thanks, guys.

17:48 MH: Oh yeah. Forever, it’s gonna go forever.

[background conversation]

17:52 TW: Alright.

17:53 MK: So the next question is from Martijn. And this question… So, Martijn enlightened us all a little bit to the world of SEO. And it sounds like we’re all interested in doing A/B tests, but it sounds like A/B testing, from an SEO perspective, has its own unique characteristics. I’m keen to hear a little bit about those.

18:14 Martijn Scheijbeler: Oh, absolutely. So mostly what you focus on with regular A/B testing, website experimentation, is that it’s all about the user metrics. You’re really focused on conversion rate, improving your website flow, usually it’s all about funnels. But for SEO and SEO experimentation, it’s a little different, because it’s all about traffic numbers, like basically getting more easily sessions from organic search. So what you focus on mostly with SEO experimentation is, instead of bucketing users, you are bucketing pages. Because you wanna make sure that the search engine and the user get to see the same thing. So that’s what we focus on with SEO experimentation. It’s like, “Okay, what kind of templates do I have on my website?” And in this case, usually the homepage doesn’t even count, because it’s just one specific template and there are not multiple variations of it. For example, for your product pages, category pages, or anything that has multiple kind of items, it makes a lot of sense to test on them to basically figure out, can I add certain things to these pages, can I remove things, what kind of things should I be optimizing for. So you’d basically split these pages in half, or in like 20% or whatever, and basically measure what the impact is on sessions from organic search, based on the changes that you’ve made there. And that’s your main KPI there.

19:33 TW: So how do you… Somebody, and I’m forgetting who asked the question, but how do you split… For a classic A/B test, you show, ’cause you’re back to users, it’s an A and B split, but how do you actually split? You don’t call up Google and say, “Hey, serve… Have your bot half the time look at this one, and half the other.”

19:51 MS: Yeah, I wish it was indeed that easy. So what you mostly do with SEO is that there’s… Because there are so many different variables, you don’t really split it 50/50. Because it could be that there is a certain outlier, or a certain page like these days for big events or big political things that happen, it might be that there’s one or two pages in that 50% that could totally create an outlier in the data. So with SEO experimentation, you wanna make sure that that doesn’t happen, so you basically create multiple buckets. So how that would work is that you have multiple original variants. So it’s more like 25, 25, 25, 25, or even more of them, so you make sure that there’s an equal divide still between all the variants. That’s how you divide it. It’s like you create multiple…

20:37 TW: But when you say 25, 25, 25, you may take your product details page and say “That’s a template…”

20:42 MS: Yeah.

20:42 TW: So you’re gonna take 25% of them…

20:44 MS: Exactly.

20:44 TW: And do one thing. You’re literally taking your entire bucket of templated content, and you’re tweaking, basically, the template or some aspect of the content on each of those…

20:54 MS: Correct. And you’re basically waiting for a few weeks usually, because you want to make sure that Google picks up on all these changes. And after that, you analyze the results based on outliers or not.
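The page-level bucketing Martijn describes (split the pages of one template into several equal groups, change only some of them, and keep more than one unchanged group so a single outlier page is easier to spot) could be sketched as below. This is an illustration under stated assumptions, not the method used at any particular company: the hash-based assignment, the `salt` value, and the example URLs are all hypothetical.

```python
import hashlib


def bucket_for_page(url, n_buckets=4, salt="seo-test-1"):
    """Map a page URL to a stable bucket so crawlers and users always see the same version."""
    digest = hashlib.sha256(f"{salt}:{url}".encode("utf-8")).hexdigest()
    return int(digest, 16) % n_buckets


def assign_pages(urls, n_buckets=4, variant_buckets=(1,)):
    """Label each page 'variant' or 'control'; the remaining buckets act as separate controls."""
    assignment = {}
    for url in urls:
        bucket = bucket_for_page(url, n_buckets)
        group = "variant" if bucket in variant_buckets else "control"
        assignment[url] = (group, bucket)
    return assignment


if __name__ == "__main__":
    # Hypothetical product-page URLs, for illustration only.
    pages = [f"https://example.com/product/{i}" for i in range(12)]
    for page, (group, bucket) in assign_pages(pages).items():
        print(bucket, group, page)
```

Organic sessions would then be compared per bucket after search engines have had a few weeks to pick up the change, with the several control buckets serving as a check on whether one unusual page is skewing a group.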

21:05 TW: Awesome.

21:05 MH: Outstanding.

21:07 TW: Thank you.

21:07 MH: Excellent.

[applause]

21:09 MH: Alright. I’m looking for a brave, brave person who’s ready to answer a tough question. Who’s ready?

21:17 S?: Peter.

21:18 MH: Peter? Really? Yes?

21:19 S?: Peter Meyer. He’s…

21:22 MH: He’s hiding. So that means he wants to do it, he’s just afraid to do it.

[applause]

21:26 MH: Peter Meyer.

21:27 S?: [21:27] ____.

21:28 MH: Oh yeah, this is a very good industry analytics question. Welcome, [21:32] ____.

[overlapping conversation]

21:34 MH: Yeah. So you know there is a member of our community that hasn’t been here for a couple years. Yeah, it’s a real tearjerker, this question.

[chuckle]

21:45 MH: What would you like to say to Simo to convince him to come to Superweek next year?

21:53 TW: Yeah.

21:54 Peter Meyer: I think I would ask him to bring his family then.

21:58 MH: Yeah!

[applause]

22:00 TW: There you go.

22:01 MH: Yeah. I like it. That’s a good entry. Anything else you wanna say to Simo right now?

22:07 PM: I like his stuff…

22:09 MH: Yeah.

[laughter]

22:10 PM: Even though I’m doing Tealium now, but yes, I like his stuff.

22:13 MH: “Tealium, Boo!” Oh, no, “Tealium, Yay!”

22:15 PM: Yeah, yeah yeah.

22:16 MH: Okay. [laughter]

22:17 PM: Just saying.

22:19 MH: Awesome. Awesome. Yeah. Simo, we miss you. Come back.

22:24 TW: Simo, we are just posters on the wall.

22:25 MH: Bring your family. That’s right. [chuckle]

22:27 TW: You’re awfully two dimensional this year.

22:28 MH: Alright. Thank you, sir.

22:30 PM: Thank you, thank you.

22:31 MH: You are a scholar and a gentleman.

[applause]

22:33 PM: Thank you.

22:33 MH: Alright. Okay. I’ve got another very important question, but this one I know who’s gonna answer it. Robert. Robert Petković. Yes, please come up sir. This question needs to be answered.

[applause]

22:51 MH: Yeah. So together, yeah, everybody. Robert Petković. Please. Yes. Yes!

[applause]

23:00 Robert Petković: [23:00] ____ answers to the previous one…

23:01 MH: Yes, but this one’s a little different. It’s about a metric but not a digital one. So, Tim Wilson’s hair.

[laughter]

23:13 MH: Like about how short should we cut it?

[laughter]

23:18 RP: About the size of that whiskey jar, I suppose.

23:23 MH: Yeah.

23:23 RP: [23:23] ____ be appropriate.

23:26 TW: If I drank everything in that whiskey jar, could you shave my head, is that the question?

23:30 RP: No, no, the size of that whiskey jar.

[overlapping conversation]

23:34 TW: It could, potentially.

23:35 MH: Are you saving it up to donate or something?

23:39 TW: It’s my… I lose all my statistical powers…

[laughter]

23:43 MH: Alright.

23:44 RP: Significantly, or?

[laughter]

23:45 MH: Significantly.

23:46 TW: I’m 95% confident.

23:48 MH: I am uncertain of this. Yeah, so Robert played a really great joke on Tim this week and we wanted to put it in the podcast because it was very good.

23:57 RP: Thank you.

23:57 MH: Yes. Thank you, Robert.

24:00 RP: Everybody knows what the joke was about?

24:00 MH: Yeah, tell. Please tell.

24:02 RP: Yeah. We were discussing with [24:03] ____ before, Tim was concerned whether he would cut his hair. It was he didn’t have time. Should he bring scissors along to Hungary, Superweek. And I asked him…

24:15 TW: This is not true.

24:15 RP: “Should I bring the scissors?” And that did stop. And I came here, and during my… I was driving for seven hours. And at the border, I told myself, “I forgot scissors.”

24:26 MH: They don’t just let you bring scissors into Hungary.

24:28 RP: Yeah…

[laughter]

24:28 RP: Over the border, no.

24:29 MH: That’s the border, yeah.

24:31 RP: And I asked Petra over there, thanks Petra, I asked her to print out scissors. Just find me something on Google images, a scissor, the image of scissors. And she brings it down and says, “Welcome to Superweek.” And I sign it.

24:44 MH: [24:44] ____.

24:46 RP: So that was the joke.

24:46 MK: And that will be in the show notes, an image of that lovely…

[overlapping conversation]

24:51 MH: Awesome. Thank you, sir. Thank you.

[applause]

24:55 TW: Let’s talk about GDPR instead of my hair.

24:56 RP: The best thing…

24:58 MH: One more comment.

25:00 RP: Just one more… The best thing so far, and I think it will be further, is every time Tim Wilson’s hair pops up on the screen. That’s the best part.

[laughter]

25:10 MH: So if you’re still presenting this week, I think you know what you need to do.

[laughter]

25:15 MH: Alright. Who’s got the next question?

25:16 MK: Okay, so we’re gonna get Hussain to come back up. There has been some hectic puzzling going on at Superweek this year. It’s a 5,000 almost cruel puzzle. And over the puzzling, I discovered that Hussain has actually been talking to my…

25:30 MH: Okay, Moe. I know you’re… Great description. So there’s a…

25:33 MK: Oh, man.

25:33 MH: Large jigsaw puzzle. Again, everybody here knows this. For anyone listening, there is a 5,000 piece jigsaw puzzle.

25:38 TW: We have listeners?

25:40 MH: Kind of an awesome conference thing, talks about… It definitely drives conversation. But so puzzling… There’s lots of puzzling going on. We all have listened to Matt…

25:47 MK: I am very passionate about puzzling.

25:49 MH: We have listened to Matt Gershoff speak. Everyone here has puzzled. But jigsaw puzzle, specifically.

25:55 MK: Oh, gotcha.

25:56 MH: Yeah. Just translating, translating for you.

25:58 MK: Okay, got it. Okay, so onto my actual point. Over said jigsaw puzzle, I discovered that Hussain has been chatting to my brother-in-law, Lee Isensee, because he got access to some really cool data. And that data is on all of you.

[laughter]

26:14 MK: So totally GDPR compliant right now. But it’s actually about Measure Slack. So I just wanted to hear if you’ve had any cool findings thus far, since you’ve started digging around.

26:25 HM: Sure. So, just to preface, Lee just mentioned randomly one day on Measure Slack that, “I am logging every message that you’re making on Measure Slack. And it’s in a database on MongoDB. And it’s somewhere in Mixpanel.” So I thought, let’s get access to it and start to play with it. So I had a new intern starting right now, so I just handed him this data and saw… This is a normal community data, you’ll just find nothing important, it will just be similar things. But what I found out was, this is before I came to this conference, what I found out was that analytics people are not really normal. So one of the things that we’re…

[laughter]

27:09 S?: Boo!

27:10 MH: But are we normally distributed?

27:12 HM: Neither normally distributed. So one of the things that we were trying to do was trying to fingerprint where a person might be from, based on what time zones they post, their posts come from. ‘Cause we don’t really have IP address data, we just have what time a person’s posts are. So we were trying to use a radar plot and just see. Most of the time a person would post in an eight-hour window, technically. Because it’s work, and you’re generally replying to other people during work, to just slack off. But what actually happened was that I found a lot of outliers that there were people that started at 4:00 AM, and then they’re back at it again at 12:00 AM, and back at it again the next day. And there was only three or four hour windows between their messages. And at that point, I was quite surprised. Because what I was expecting was that mostly people would talk within themselves and their groups, but what actually happened was that if you got stuck in a conversation, you were just replying over and over whenever you woke up and your Slack was buzzing.
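The idea Hussain outlines, guessing a person's working hours from nothing but message timestamps, can be sketched as a search for the busiest eight-hour window on a 24-hour clock. This is a minimal illustration, not the analysis his team ran: the function name, the UTC-hour input format, and the sample data are assumptions.

```python
from collections import Counter


def busiest_window(post_hours_utc, window=8):
    """Return (start_hour, share): the circular window holding the most posts.

    post_hours_utc is a list of integer hours (0-23) at which one person posted.
    A share close to 1.0 suggests a regular working day; a low share suggests
    the round-the-clock posting described in the show.
    """
    counts = Counter(h % 24 for h in post_hours_utc)
    best_start, best_total = 0, -1
    for start in range(24):
        # Wrap around midnight with modulo so 22:00-06:00 counts as one window.
        total = sum(counts[(start + k) % 24] for k in range(window))
        if total > best_total:
            best_start, best_total = start, total
    share = best_total / len(post_hours_utc) if post_hours_utc else 0.0
    return best_start, share


if __name__ == "__main__":
    # Hypothetical posting hours for one user, for illustration only.
    hours = [13, 14, 14, 15, 16, 17, 18, 19, 20, 4]
    print(busiest_window(hours))
```

The start hour of the busiest window gives a rough hint at the poster's time zone, while the share flags the outliers whose messages are spread across the whole day.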

28:23 MK: So basically we all need to sleep some more.

28:25 HM: Yeah. Please sleep more often to find more insights.

[laughter]

28:28 MH: Thanks.

28:29 MK: Thank you for that.

[applause]

28:30 MH: Oh yeah. So if you didn’t understand anything about that, there is a Measure Slack and you should join it. And in fact, there’s a URL where you can join it at join.measure.chat. So yeah, that’s our little plug for Measure Slack. Alright, we’ve got another very important question. The next person I’d like to get up here to ask a question is none other than Raphael Calgaro from Montreal. Let’s give him a hand.

[applause]

29:02 Raphael Calgaro: Thanks, Michael.

29:03 MH: So this Superweek always has a French-Canadian.

29:07 RC: Yeah.

29:07 MH: But not always the same one.

29:09 RC: No.

29:10 MH: But what I would like to ask you initially is WWSHD? Or “What would Stéphane Hamel do?”

[laughter]

29:19 RC: Same thing as Yoda.

29:20 MH: I’m just kidding. Okay, so this is my question for you, sir. By the way, this young man, this fine practitioner of analytics brought an amazing bottle of Canadian whiskey, which I believe we’ve all consumed. It was really good maple whiskey.

[applause]

29:34 MH: Thank you so much for bringing it.

29:36 RC: [29:36] ____ pleasure.

29:36 MH: What has been the highlight of Superweek for you, thus far?

29:41 RC: Can I do a plus one here?

29:42 MH: Yeah.

29:43 RC: I’ve got two. I’ve got a shallow one and a deep one.

29:45 MH: Ooh. Deep.

29:47 RC: Should I start with the shallow or the deep?

29:49 MH: Yeah, probably…

[laughter]

29:50 MK: He really wants that prize. Yeah.

29:52 MH: Yeah. Well, no, that’s only the people who come up before they know. He’s… Oh, yeah. It’s okay, go.

[overlapping conversation]

[laughter]

30:00 RC: Alright, so the shallow one. There’s a bus that can take you to Superweek. And on the bus, there’s this fine blond lady that comes in and when I ask her her name, she said she is Moe. And on the show notes, she’s not a blond. So that’s the first thing that really amazes me.

[laughter]

30:18 MH: Wow. When you said shallow, this is like…

[laughter]

30:22 MH: This is like you’re not even getting your ankles wet [30:23] ____.

30:23 RC: I’m talking about a lady’s hair color.

30:25 MH: Yeah.

30:25 RC: That’s…

30:25 MK: Are we talking about the picture where my hair is purple?

30:28 RC: Sort of, yeah.

[laughter]

30:29 MK: Okay.

[overlapping conversation]

30:32 RC: Color scheme on the website, guys.

30:33 MH: Yeah. It’s an artistic choice. Come on.

30:36 RC: Of who?

30:37 MH: Yeah, okay. Of Michael.

[chuckle]

30:40 RC: The other one, the deeper one would be the talk by Stein, Stein Rasmussen. His main point was about stop asking stupid questions about your KPI and start asking real questions. So he said you should not ask about KPI, you should ask about KPQ which is Key Performance Question. And from those KPQ, technically, you should get your KPI from.

31:06 MH: Awesome.

31:07 S1: Does that make sense?

31:07 MH: Yeah, very much. Thank you very much. Thank you, Stein. Stein!

[applause]

31:12 MH: Thank you, Raphael.

[applause]

31:14 MH: Alright. Alright. Alright.

31:18 MK: And I just wanna clarify quickly that we had a lengthy conversation on the bus about the color scheme of the Analytics Hour webpage. If anyone has any feedback on that, please send it to Tim. He’d love to hear your thoughts.

[laughter]

31:33 MH: He loves bleeding blue.

31:34 TW: I have blue skin on the website. I’ll be emailing myself profusely.

[laughter]

31:41 TW: Mariia, if you can come up.

31:43 S?: Mariia.

31:44 TW: And this is where I… I probably already butchered your first name, is it Bocheva?

31:48 Mariia Bocheva: Yeah.

31:48 TW: Oh. I guess I could give you the…

31:50 MB: Sure.

31:50 TW: So Mariia’s from OWOX BI?

31:54 MB: Right.

31:54 TW: OWOX BI. OWOX.com. So we pride ourselves on the podcast that we don’t shill for products, we don’t push products. So this was not Mariia’s doing. This just came up ’cause we were talking in the… While we were eating, and she brought this up. She was like, “Oh, we do this cool thing,” and I’m like, “Really? That’s pretty cool,” so I went and checked it out. So, as we have talked about, machine learning and AI and doing more advanced stuff with statistics, it came in the first session that wasn’t about GDPR. There was a “Hey, to do this stuff, you have to have detailed data. So if you’re using Google, you want your data in BigQuery or you wanna be doing a custom dimension that’s capturing an ID.” And so we talked through, there were various options, some of them very technical, require a lot of work, using the Google Cloud Platform. And it turns out that OWOX BI has, as part of their… Damn it, pipeline product?

32:46 MB: Right. You got it. [chuckle]

32:46 TW: Okay. Yeah, there’s kind of a solution for that. So I wanted her to actually share with everyone here who was maybe in one of those sessions, saying, “What other options do I have,” to explain what that tool does.

32:58 MB: Yeah, I’ll gladly explain. The main idea there is that if you’re using Google Tag Manager, you can just set up one custom HTML tag or a custom task, to capture data that you are sending from a website into Google Analytics, and we’ll copy that request and we’ll push it into BigQuery. So you’ll have your raw data from the website on a hit level in BigQuery, without doing 360. And that doesn’t mean that it supplants 360, ’cause 360 has a bunch of other cool features. But if you are not big enough to go with 360 and you want to get raw data in BigQuery and start doing stuff with it, without technical implementation, without writing your own connector. You can just go there, add one custom HTML tag, and get your data in BigQuery running. And if you don’t use Google Tag Manager then you’ll have to add JavaScript code on the website. But I think everyone here has GTM.
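To make the "copy that request" idea concrete, here is a minimal sketch of what could happen on the receiving side of a duplicated Google Analytics hit. It is not OWOX BI's implementation. It assumes the copied hit arrives as a Measurement Protocol query string (parameters such as `tid`, `cid`, `t`, `dp`, and `dl`), and the column names and the `hit_to_row` helper are hypothetical. The actual load into BigQuery is left out.

```python
from urllib.parse import parse_qsl

# Measurement Protocol parameter -> hypothetical warehouse column name.
FIELD_NAMES = {
    "tid": "property_id",
    "cid": "client_id",
    "t": "hit_type",
    "dp": "page_path",
    "dl": "page_url",
}


def hit_to_row(payload, received_at):
    """Flatten one copied hit payload into a dict that could be loaded
    as a single hit-level row. Missing parameters become None."""
    params = dict(parse_qsl(payload, keep_blank_values=True))
    row = {"received_at": received_at}
    for key, column in FIELD_NAMES.items():
        row[column] = params.get(key)
    # Keep the untouched payload so nothing is lost if new fields appear.
    row["raw_payload"] = payload
    return row
```

Storing the raw payload next to the parsed columns is a design choice in this sketch: it lets later queries recover parameters that were not mapped up front.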

[laughter]

34:00 MB: I hope.

34:02 TW: Awesome. So I thought that was pretty cool, right? So, like, round of applause.

[applause]

34:06 MH: Yeah!

34:07 TW: Awesome. Thank you.

34:09 MB: Something to add. If you have any questions on how to set up, I’m here tomorrow and I can help you with that.

34:15 TW: And for those who aren’t here, we will link to it on our show notes page, so it will be available if you wanna go check it out more.

34:18 MH: Yes. Absolutely.

[applause]

34:22 MH: Alright. So you know that the questions just keep getting harder and harder. Alright. Who’s ready? Who’s willing? Who’s gonna be able to answer this next very difficult question?

34:37 MK: I think you need to make it clear you need a volunteer.

34:39 MH: I need a volunteer.

[chuckle]

34:40 MH: I need a volunteer, yeah.

34:42 TW: [34:42] ____.

34:43 MH: Or I’ll start choosing people out of the audience.

34:46 S?: David McBride.

34:47 S?: Oh.

34:48 MH: Oh! I see a hand up. Charles Farina!

34:51 S?: Oh, [34:52] ____. There we go.

34:52 MH: Oh, Charles!

[applause]

34:54 MH: Charles Farina’s hand was up. Great!

34:55 TW: Oh, this is gonna be a good one.

34:58 MH: Alright. This is a great question for you, Charles. Alright, here we go. Hand this man a microphone. Yeah.

35:04 Charles Farina: [35:04] ____ sponsorship?

35:07 MH: Yeah. Charles is with Analytics Pros, who is a sponsor of Superweek. We’re so excited to have you here.

[applause]

35:14 CF: Thank you.

35:14 MH: Yeah. Alright. Whose job is it to look after data quality? The analyst, or the implementation specialist, the data engineer? Whose job?

35:28 CF: All of us. We’re all in it together.

35:29 S?: Yeah!

[applause]

35:30 MH: Oh, boy. Very political, very political.

35:36 CF: But seriously, all of us.

35:37 MH: But seriously.

35:37 CF: If you touch the code, you have to check it. If you’re analyzing the code, you have to be confident in it. So no matter what your role or responsibility is, in order to have the trust, you need to have a team and data quality and standards and all that stuff.

35:53 MH: Alright.

35:54 MK: But so just… I’ve got a follow-up.

[overlapping conversation]

35:56 MH: Follow-up question.

35:56 MK: But sometimes when something is everyone’s responsibility, it means no one takes responsibility for it. So is there a particular role that maybe the buck stops with? As in, ultimately it’s their job to call it… I don’t know, I feel like when you just say it’s everyone’s job… Well, I know my data engineers, they’re like, “That is your job, you’re the analyst.”

36:18 CF: Sure. I think it flows nicely into tag management workflows. So let’s say you have a tagging request for marketing. Usually you have a strategist who should be able to communicate that or create a ticket, down to development. And when you get into the tag management process, you have your engineer who goes in and creates the tag. They should be able to know how to open up the data layer and see if a tag’s firing. Then, before that gets published, it should go back to the reporting person to make sure it’s in the format, and that the style is meeting the needs they had anticipated. And that way, everything gets eyes from both stakeholders before it goes live. And more importantly, it needs to be a process, because a site is never finished. It’s ongoing, it changes. And when things do break, it’s not about pointing fingers, it’s about just trying to have the best quality and data integrity you can. ‘Cause without that, none of us can really make confident decisions in the data.

37:15 TW: I have to jump in. There is such a universal thing going on right now with this question, and Charles is answering it. So everybody here who was at the Golden Punchcard Awards know that Charles and Analytics Pros was a finalist, because they’ve got real-time alerts, they’ve hooked into the Realtime API for GA. And one of the examples you gave, which I think would probably be the role of an analyst, would say “Hey, one of the alerts you should set up is: Did my traffic drop below a certain level?” That will tell me that something has gone wrong, fundamentally with my tagging. All you here in the room know about that. What’s not known is that one of the co-hosts of the podcast, who I’m not gonna name, but everybody here can tell who it is based on that co-host’s performance behavior right now…

[laughter]

37:58 TW: Was actually checking a website and checking the traffic, and had seen a flatline that happened due to a data layer change within the last 24 hours. So…

38:07 MH: It’s real. It’s real.

38:08 TW: It’s real, the struggle is real.
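The "did my traffic drop below a certain level?" alert mentioned a moment ago can be sketched as a simple threshold check. This is an illustration only, not the Analytics Pros solution: it assumes recent hit counts per interval have already been fetched from somewhere, and the `traffic_dropped` function and `min_ratio` parameter are hypothetical.

```python
from statistics import median


def traffic_dropped(history, current, min_ratio=0.5):
    """Return True when the current interval's hit count falls below
    `min_ratio` times the median of recent intervals.

    `history` is a list of recent counts; with no history there is no
    baseline, so no alert is raised."""
    if not history:
        return False
    baseline = median(history)
    return current < baseline * min_ratio
```

A flatline caused by a data layer change, as in the story above, would show up as `current == 0` against a healthy baseline and trip the check.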

38:10 CF: Whose fault is it?

[laughter]

[overlapping conversation]

38:12 TW: That’s what we’re trying to figure out.

38:13 MH: Whose head rolled?

38:15 TW: That’s right.

38:16 MH: So, based on your answer, somebody’s getting fired.

[laughter]

38:19 MH: No, I’m just kidding.

38:21 CF: Or a haircut.

[laughter]

38:23 S?: Whoa!

38:26 TW: That’s not corporate policy, but if you’re gonna shave, maybe we’ll talk.

38:30 MH: Alright.

38:30 S?: Oh!

38:31 MH: Oh. Alright. Thank you, sir. Alright.

[applause]

38:37 MH: Our next person has worked so hard. He works very hard, and we want… We need… We require of him just one more service. Yehoshua, could you join us for just a second?

[applause]

38:53 MH: The hardest working man in the analytics business. Alright, Moe’s got a question for you.

38:58 MK: So to the question…

39:00 Yehoshua Coren: Oh, thank you.

[laughter]

39:00 MK: We’ve got someone in the audience, AKA Krista from Google, who I’m sure is sitting there with a notepad, ready to take any feedback.

39:11 MH: Question, now.

39:11 MK: My question is, given that Krista’s listening attentively, if you could change one thing about Google Analytics, what would it be?

[laughter]

39:21 TW: Oh.

[laughter]

39:24 TW: Just one?

39:25 MH: He’s about to go Super Saiyan, everybody.

[laughter]

39:30 MK: I think he’s warming up.

39:33 YC: The ability to back stitch user data.

39:36 MH: Oh. Good request.

39:38 TW: The ability to back-stitch user data.

39:39 YC: The ability to back-stitch user data, because a cookie ID becomes aware and we know who the person was. You could do it in BigQuery, but that’s not Google Analytics.
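One reading of "back-stitching" is: once a cookie ID is tied to a known user, earlier anonymous hits from that cookie get the same user ID. The sketch below illustrates that reading on hit-level rows, the sort of thing that could be done on exported data. The field names `cookie_id` and `user_id` and the `back_stitch` function are assumptions for illustration, not Google Analytics or BigQuery schema.

```python
def back_stitch(hits):
    """Return a new list of hits in which every hit from a cookie that
    was ever identified carries that cookie's user ID.

    Each hit is a dict with a `cookie_id` and an optional `user_id`."""
    known = {}
    for hit in hits:
        user_id = hit.get("user_id")
        # Remember the first user ID seen for each cookie.
        if user_id and hit["cookie_id"] not in known:
            known[hit["cookie_id"]] = user_id
    return [
        {**hit, "user_id": hit.get("user_id") or known.get(hit["cookie_id"])}
        for hit in hits
    ]
```

Note that the function rewrites every row, including ones recorded before the user was identified, which is the kind of reprocessing of historical data at issue in this request.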

39:53 MH: I think she just wrote that down.

[laughter]

39:55 Krista Seiden: There’s a follow-up to that.

39:57 S?: Oh!

39:58 MH: Get her the microphone.

39:58 S?: Oh! [40:00] ____.

40:00 MH: Oh! You sprung back to life.

[laughter]

40:04 KS: So, a follow-up. That tends to be really expensive because you’re talking about reprocessing data. So if we can go back in time and ask you one thing that wouldn’t require reprocessing, but would make Google Analytics better… [40:17] ____?

[laughter]

40:17 TW: Wow. And how a product manager handles feedback. Redirect!

40:20 KS: Yeah. And that’s how you redirect a question.

[laughter]

40:27 MH: The question has been asked. I don’t know what you want to do.

40:29 TW: Actually… Is anybody dying to provide an answer to that question? Oh, oh, look. Oh, Charles, we got Charles… Oh. It’s gonna be the… It’s the page dimension.

[overlapping conversation]

40:39 MH: Here comes a new challenger.

40:41 CF: For the love of all things GDPR, please put the page dimension first in the list.

[laughter]

40:49 CF: It’s so simple!

40:53 MH: And there goes any chance of sponsorship by Google, I guess.

[laughter]

40:58 S?: We’re done.

41:00 TW: Alright!

41:00 MH: Thank you, sir.

41:01 CF: Ladies and gentlemen, Moe! I’m giving up the microphone.

41:03 MH: Alright. Thank you, sir. He works hard. And now, if you come back, David McBride, if you come back next year, you know what you’re in for. So, yeah, be ready.

[laughter]

41:14 MH: Alright. I’ve got a question for a one Mr. Erik Driessen.

[applause]

41:20 MH: Could you please join us up here, Erik? Welcome, welcome. Thank you for coming. Alright. You were one of the Golden Punchcard finalists, and I’m gonna tell you, I think my theme song would be “Staying Alive.”

41:33 Erik Driessen: Nice.

41:34 MH: I enter the office, “You can tell by the way that I walk… “

[laughter]

41:38 ED: Wow, that’s a good choice.

[overlapping conversation]

41:40 TW: Is this what happens when you stand next to [41:41] ____?

41:41 MH: I have thought of this idea many times, actually. This is something I’ve wanted to do. And we use our Raspberry Pi to measure how much beer we pour out of our kegerator.

[laughter]

41:49 ED: Oh, that’s awesome.

41:50 MH: And we have a Twitter account, @kegalytics. And it’s hooked up to GA…

41:55 TW: Both of our listeners are wondering what the fuck you are talking about right now.

41:57 MH: Yeah. At Search Discovery, we measure our beer. But I have a question for you. I was intrigued, how you had the insight that you can totally change the perception of data collection sometimes by adding a benefit to the data subject. Can you recap what you did there?

42:15 ED: Yeah. So basically we have a project where we do WiFi tracking, so we track when people enter the office. And most of the people don’t really like being tracked when they enter the office, so we thought about ways to add a beneficial effect to being tracked. So everyone who was in the system, they get their personal intro song played whenever they enter the office. So every time I go to the office, I personally hear the Arrow theme song, some of you might know the song. So that gives everyone who enters the office basically a happy face, so that’s really good for tracking. And I think, in our day-to-day business, we mainly use tracking and the user will benefit from it somewhere in the end, when we have a complete model that attributes all the user and cross device tracking and stuff like that. We just flipped it and thought about ways to have the user benefit from it instantly, as soon as they’re being tracked.
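The intro-song idea can be sketched as a lookup that fires when a known device is first seen each day. This is not the project's code, which the speaker describes separately: the device identifiers, the song file names, and the once-per-day rule are all assumptions, and detecting devices on the WiFi network and playing audio are left out.

```python
from datetime import date


class IntroSongPlayer:
    """Pick an intro song the first time an opted-in device is seen
    on a given day."""

    def __init__(self, songs_by_device):
        # Only devices whose owners opted in appear in this mapping.
        self.songs_by_device = dict(songs_by_device)
        self._last_played = {}

    def on_device_seen(self, device_id, today):
        """Return the song to play, or None if the device is unknown
        or its song has already played today."""
        song = self.songs_by_device.get(device_id)
        if song is None or self._last_played.get(device_id) == today:
            return None
        self._last_played[device_id] = today
        return song
```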

43:04 MH: Very nice.

43:05 ED: And I wanted to add to this, I got some questions about will we share the code? We’re gonna put the code on GitHub and share the hardware requirements. So everyone can run this in their own office if they want to.

[applause]

43:14 MH: My dream will finally come true! Perfect, excellent. Thank you, Erik.

43:18 ED: Thanks.

43:19 MH: Thank you so much.

43:21 TW: I love that, because I feel like we talk about that at times, where we’re like, “Oh, if you’re collecting data, what’s the benefit to the user?” And that little example, it was like, “Yeah, I don’t want… “

43:31 MH: Such a good example.

43:32 TW: “I don’t want my boss to know when I show up to the office.”

[laughter]

43:37 TW: Even though he would be very cool with it. Right, Michael?

43:40 MH: Yeah. Production over presence, Tim.

[chuckle]

43:42 TW: That’s right. Still onboarding. So we’re gonna bring up a long-time friend of the show, Mr. Pawel Kapuscinski. Where is he? Yeah, there he is.

43:53 MH: Yay! Pawel!

[applause]

43:55 TW: I will say… So definitely I feel like I know, Pawel, I know you very well, even though we literally met for the first time seven hours ago maybe, in person. So Pawel’s very active on the Measure Slack. He is tinkering around with stuff. He’s a big sports fan, so he is finding all sorts of things to do with various football data.

44:19 Pawel Kapuscinski: Football, basketball.

44:20 TW: Football, basketball.

44:21 PK: Soccer, whatever you want.

44:23 TW: Soccer. What is this soccer you speak of?

[laughter]

44:27 TW: We’re not talking American Football, right? You haven’t done American football?

44:29 MH: Yeah, American football too.

[overlapping conversation]

44:31 TW: Oh wow.

44:32 MH: Pawel’s one of us.

44:32 MK: You could try Australian Football too. That’d be alright.

44:34 PK: I couldn’t find data. But if you find me data, I can do stuff.

44:38 MK: We can talk about this after.

44:39 PK: Okay.

44:39 TW: Okay. We could do it right now. Yehoshua and Krista have gone off to argue about reprocessing and…

[laughter]

44:47 TW: That’s not true. It’s the medium. See, I can say things like that. Creating a different experience.

44:53 S?: [44:53] ____.

44:54 TW: So Pawel did something really cool. We didn’t start transcribing our episodes until episode 60. And we knew that we could do some machine translation and that would be relatively quick and relatively inexpensive. We hadn’t gotten around to it but… Pawel, you actually, we didn’t know until you’d done it, but you actually did some text mining and posted the script of the transcripts of our episode, which then had me scramble to go and back-populate all the past transcripts. But for the stuff that you’ve processed so far, what’s one or two nuggets of interesting things you found from mining the text from this podcast?

45:33 PK: I think the most obvious one, you got Matt Gershoffed, when he came to the podcast, and you said AI and machine learning during the show three times more than the next show in the line. So you said I think 39 times, on the next show, the second time, you said… It was just 15 times. So you were talking a lot obviously, yeah.
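Counting how often "AI" and "machine learning" come up per episode can be sketched as below. This is not Pawel's code: it assumes each transcript is available as a plain string keyed by an episode label, and the function names are hypothetical.

```python
import re

# "AI" is matched case-sensitively so ordinary words are not counted;
# "machine learning" is matched in any capitalization.
PATTERNS = (
    re.compile(r"\bAI\b"),
    re.compile(r"\bmachine learning\b", re.IGNORECASE),
)


def count_mentions(transcript):
    """Count mentions of the tracked terms in one transcript."""
    return sum(len(pattern.findall(transcript)) for pattern in PATTERNS)


def rank_episodes(transcripts):
    """Return (episode, mentions) pairs, most mentions first."""
    counts = {ep: count_mentions(text) for ep, text in transcripts.items()}
    return sorted(counts.items(), key=lambda item: item[1], reverse=True)
```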

45:54 S?: [45:54] ____.

45:54 MH: That’s why AI and machine learning are very important.

45:58 MK: And also if you can hear any rumblings…

46:00 MH: Time for the word count.

46:01 MK: Throughout this show, it’s someone being Matt Gershoffed at the bar, throughout the entire show.

[laughter]

46:09 TW: Which I can attest that it does help to have… Be close to the bar when you’re being Matt Gershoffed. Poor Matt. That wasn’t mean, that was said with tenderness.

46:18 PK: Second would be, that podcast is definitely not for children.

46:22 TW: It’s not…

[laughter]

46:23 PK: You like to swear.

46:24 TW: The fuck you say?

[laughter]

46:28 TW: Yeah. Interestingly, there are more kids around right now [46:30] ____. Sorry, kids. Sorry.

46:36 PK: I hope it’s not about me.

46:37 TW: No. [chuckle] Awesome. That’s great.

46:40 PK: And the one more really interesting, the two most useful words… The most used words at the podcast, one is data, which is we love, and second is people, that we hate.
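A most-used-words count like the one described here can be sketched with a word tally that skips filler words. The stop-word list below is a tiny placeholder chosen for illustration, and `top_words` is a hypothetical name. A real analysis would need a fuller list.

```python
import re
from collections import Counter

# Deliberately small placeholder list of words to ignore.
STOP_WORDS = frozenset(
    {"the", "a", "and", "of", "to", "is", "we", "that", "it", "in", "i", "you"}
)


def top_words(text, n=2):
    """Return the `n` most frequent words in `text`, ignoring case
    and skipping stop words."""
    words = re.findall(r"[a-z'’]+", text.lower())
    counts = Counter(word for word in words if word not in STOP_WORDS)
    return [word for word, _ in counts.most_common(n)]
```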

[laughter]

46:50 TW: Oh. And data…

46:51 MH: ‘Cause we are data people.

46:53 TW: Awesome. So thanks, and Pawel…

46:56 MH: Thank you, Pawel!

[applause]

47:00 TW: Pawel did post that code on GitHub. I plan to play around with it. If anybody’s dabbling with, or wants to dabble with text mining, you have a ready dataset of some messy data. I certainly plan to play around with it. So that was kinda cool and fun.

47:13 MH: Alright, and our final entrant.

47:16 MK: Before I do that, I wanna give out the prize. I’d really like to give out the prize.

47:20 MH: Oh, you wanna give…

47:21 MK: And I feel like maybe I should just get to pick it on my own.

47:23 MH: Yeah, yeah. Absolutely.

47:25 MK: And it should just be anyone who talked tonight.

47:25 TW: Yes, ma’am.

47:27 MK: Because…

47:29 MH: Is it me?

47:31 MK: Well, there’s two things. So one, tomorrow I’m actually talking about this particular bias, which Tim reminded me of, which is recency, which is really unfortunate for what I wanna do with the prize right now. But I really wanna give it to Pawel. So I’m just making the call.

[laughter]

47:47 MH: What! Thank you, Pawel. The Kayleigh Rogers Commemorative Award.

47:54 MK: And if you wanna find out about recency bias, come along tomorrow.

[laughter]

47:58 MK: Okay, so the last question is actually for everyone in the audience to participate. And we’re gonna use last clap attribution.

48:07 MH: Oh, wow, alright. It’s a proven method.

48:08 MK: So, there has been three really big topics at Superweek. And what we wanna hear from you guys, is which one has been most terrifying. So the first topic… And maybe, I don’t know, maybe you can clap for all…

48:23 TW: Don’t you have to say what all three are before they clap, or…

48:25 MH: Yeah.

48:26 TW: I’m just checking.

48:26 MK: Okay, fine. So the first one is GDPR. The second one is Tim’s luscious locks.

48:35 S?: Ooh!

[applause]

48:36 MH: Oh. Yeah.

48:36 MK: [48:36] ____ And then of course third is machine learning and AI.

48:41 S?: Whoo!

48:41 MH: Ooh. Yeah, that’s good too. But it’s which one’s most terrifying?

48:45 MK: Yeah, most terrifying.

48:45 MH: Yeah. The most terrifying.

48:46 MK: So number one, GDPR.

48:49 MH: GDPR…

[applause]

48:50 MH: It’s pretty scary, pretty scary. It’s pretty good. That’s pretty good.

48:53 MK: Number two, Tim’s luscious locks.

[applause]

49:07 MH: Oh yeah! It’s a standing ovation! Oh my gosh! Tim’s locks.

[laughter]

49:19 MK: I’m not gonna go on to three.

[laughter]

49:23 MH: Okay. This is the serious part of the show because now we’re talking to everyone who’s not here, out there. If you’ve been listening and you’re like, “Why am I not at Superweek having a good time with all of these people?” You’re probably saying, “How do I connect? How do I get into this?” The best way is through the Measure Slack, through our Facebook page, through our website at AnalyticsHour.io. Please let us know about your interest in Superweek, we’ll connect you with Zoli. You’ll get here next year, be part of this amazing experience. We’ve loved being here. We thank all of you for letting us be here. And we encourage anybody listening to subscribe to the show, through whatever medium you use, on iTunes or Android or whatever. We like subscribers. And we have a last minute question from Jim Sterne. Oh, I know what question it is.

50:29 TW: [50:29] ____ question.

50:31 MH: I know what question it is. And I have a really great…

50:32 JS: My question is, when’s the next time these fine people can see you do a podcast live?

50:39 MH: I love that question, because the next time you’re gonna see us live is at the Marketing Evolution Experience in Las Vegas, in June 2018, with Zoli and Yehoshua coming with the Golden Punchcard. That is a not to be missed, so get there.

51:00 MK: And we’re gonna be trialling something completely new and different for our next live audience show.

51:08 TW: Which we don’t know what that is yet.

[laughter]

51:10 MH: We have no idea of what that is.

51:12 TW: Just clarifying.

51:13 MH: So for my two co-hosts, Tim and Moe, and for all of our guests, remember, keep analyzing.

[applause]

51:24 S?: Yeah! Oh, yeah!

[music]

51:32 S1: Thanks for listening. And don’t forget to join the conversation on Facebook, Twitter, or Measure Slack group. We welcome your comments and questions. Visit us on the web at AnalyticsHour.io, Facebook.com/AnalyticsHour, or @AnalyticsHour on Twitter.

[music]

51:52 S?: So smart guys want to fit in so they made up a term called Analytics. Analytics don’t work.

52:00 MH: Dum, dum, Superweek. Dum, dum, dum, Superweek. Dum, dum, Superweek. Dum, dum, dum, Superweek.

52:09 MH: Can you please just punch me in the jaw?

[laughter]

52:13 MH: ‘Cause it’s just it’s open.

[laughter]

52:15 MK: Oh. Oh. So is my bar tab.

52:19 S?: Oh! Ooh!

52:20 S?: 306.

52:21 MK: That was a joke [52:22] ____.

52:22 S?: Shots fired.

[laughter]

[overlapping conversation]

52:24 S?: 306, everybody.

52:26 S?: Moe might have gone and checked to confirm that no people had not actually been ordering drinks on her room.

52:33 S?: Hello. Hello, Krista. Hello, raise your hand, in the back. We can’t see you on the podcast. [52:40] ____.

52:44 TW: The MC has been holding court quite a bit this conference.

52:47 S?: Yeah.

[laughter]

52:48 TW: [52:48] ____ is very used to holding the microphone.

[laughter]

[overlapping conversation]

52:52 S?: Yeah. Alright.

52:55 MH: Let me hand this over to Moe. This is a part where we’re gonna edit the whole thing out. You’ll never hear this part.

53:02 MK: Don’t give me whiskey. Normally, I can’t drink during the show ’cause we record at like eight in the morning.

[laughter]

[overlapping conversation]

53:10 S?: Analytics don’t work!

53:11 MH: Analytics don’t work. Bunch of nerds.

53:13 MK: You should see them when I bring the questions out. There was…

53:15 S?: I saw that, I was like, “Oh… “

[applause]

53:20 MH: [53:20] ____ Superweek. Yeah.

[music]
